What is A/B Testing in Marketing: A Complete Guide to Data-Driven Optimization

Introduction

Marketing decisions have historically relied on intuition, experience, and educated guesses. While expertise remains valuable, data-driven decision making through A/B testing has transformed how successful marketers optimize campaigns, improve conversion rates, and maximize return on investment.

A/B testing, also called split testing, is the practice of comparing two versions of a marketing element to determine which performs better based on measurable data rather than assumptions. Whether you are testing email subject lines, landing page designs, ad copy variations, or call-to-action buttons, A/B testing replaces guesswork with evidence.

The methodology is deceptively simple: show version A to half your audience and version B to the other half, measure which achieves better results, then implement the winner. Yet beneath this simplicity lies sophisticated statistical analysis, experimental design principles, and strategic thinking that separates effective testing from wasteful tinkering.

This comprehensive guide explains what A/B testing is, why it matters for marketing success, how to design and execute valid tests, common mistakes to avoid, and best practices for building testing into your marketing culture. Whether you're new to testing or looking to refine your approach, you'll gain practical frameworks for making data-driven marketing decisions.

What is A/B Testing? The Fundamentals

A/B testing is a randomized controlled experiment comparing two versions of a single variable to determine which produces better results against a specific goal metric.

Core Components

Control (A): The current or baseline version, such as your existing email template, current landing page, or original ad copy.

Variant (B): The challenger version with one specific change, such as a different headline, modified button color, or alternative image.

Random Assignment: Traffic or audience splits randomly between versions, ensuring each group is statistically similar.

Success Metric: The measurable outcome that determines the winner, such as click-through rate, conversion rate, revenue per visitor, or email open rate.

Statistical Significance: Mathematical confidence that observed differences reflect true performance differences, not random chance.

How It Works in Practice

You're uncertain whether a red or blue call-to-action button will generate more clicks on your landing page. You create two identical pages except for button color. Traffic splits 50/50 randomly between versions. After collecting sufficient data, statistical analysis reveals whether one color genuinely performs better or if observed differences are just random variation.

If the blue button shows a statistically significant improvement (say, 15% more clicks), you implement it permanently. If results show no meaningful difference, you have found no evidence that button color affects performance for your specific audience, and you can focus testing efforts elsewhere.

Why A/B Testing Matters for Marketing Success

A/B testing delivers concrete business value through several mechanisms:

Eliminates Costly Assumptions: Marketing decisions based on "best practices" or gut feelings often fail because every audience is unique. Testing reveals what actually works for your specific situation.

Compounds Small Improvements: A 5% conversion rate improvement might seem modest, but compounded across multiple optimizations (landing page, email, ads), small wins accumulate into substantial business impact.

Reduces Risk: Major redesigns or campaign changes risk damaging performance. Testing new approaches with a portion of traffic before full implementation limits downside while exploring upside potential.

Provides Learning: Even "failed" tests teach you about your audience. Learning that emotional appeals outperform rational benefits, or that longer form content converts better than short, informs broader strategy.

Builds Confidence: Data-driven decisions create organizational alignment. Instead of debating opinions, testing provides objective evidence for strategic choices.

Increases ROI: Optimizing existing traffic generates better results without increasing advertising spend, directly improving return on investment.
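
To make the compounding point concrete, here is a small arithmetic sketch. The three 5% lifts and the funnel stages they apply to are illustrative assumptions, not figures from a real campaign, and the sketch assumes the lifts are independent and multiply through the funnel.

```python
# Illustrative assumption: three independent 5% relative lifts,
# one at each funnel stage (ad click, landing page, email follow-up).
lifts = [0.05, 0.05, 0.05]

combined = 1.0
for lift in lifts:
    combined *= 1.0 + lift  # lifts multiply through the funnel rather than add

total_lift = combined - 1.0
print(f"Combined lift: {total_lift:.1%}")
```

Under these assumptions the combined lift is about 15.8%, slightly more than the 15% you would get by simply adding the three improvements.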

Real Business Impact Examples

A SaaS company testing landing page headlines increased trial signups 21% with a single change, generating $400,000 additional annual revenue from the same traffic volume.

An e-commerce retailer testing product page layouts improved conversion rate 12%, equivalent to $2 million in annual sales without increasing marketing budget.

An email marketer testing subject lines improved open rates 18%, significantly increasing campaign reach and engagement without sending more emails.

These aren't theoretical benefits: A/B testing delivers measurable, attributable business improvements.

What Can You A/B Test in Marketing?

A/B testing applies across virtually every marketing channel and element.

Email Marketing Tests

Subject lines (length, personalization, emojis, urgency)

Preview text

Sender name

Email design and layout

Call-to-action placement and copy

Personalization strategies

Send times and days

Content length

Landing Page Tests

Headlines and subheadlines

Hero images or videos

Form length and fields

Button copy and design

Social proof elements

Trust signals and guarantees

Page layout and structure

Content length

Paid Advertising Tests

Ad copy and headlines

Images or video creative

Call-to-action text

Audience targeting parameters

Ad placement

Landing page destinations

Website Tests

Navigation structure

Homepage design

Product page elements

Checkout process steps

Pricing display

Value proposition messaging

Social Media Tests

Post copy variations

Image types (photos vs graphics)

Posting times

Hashtag strategies

Call-to-action approaches

The key is testing elements that plausibly impact your goal metric. Testing button corner radius likely won't move conversion rates significantly. Testing value proposition messaging might.

How to Design a Valid A/B Test

Proper experimental design separates meaningful tests from inconclusive experiments.

Step 1: Define a Clear Hypothesis

State what you're testing and why you believe one version will outperform.

Bad hypothesis: "Let's see if a different headline works better"

Good hypothesis: "A benefit-focused headline will increase signups 10%+ versus our current feature-focused headline because our audience research shows they care more about outcomes than capabilities"

Strong hypotheses explain the change, predicted outcome, and reasoning based on data or insights.

Step 2: Select One Primary Metric

Choose the metric that matters most for this test: conversion rate, revenue per visitor, click-through rate, or signup rate.

Secondary metrics provide context but don't determine the winner. If you're optimizing for conversions, the version that converts better wins even if engagement metrics like time on page differ.

Step 3: Change Only One Variable

Test one element at a time. If you change both headline and image simultaneously, you can't attribute performance differences to either specific element.

This single-variable rule enables clear learning. You know exactly what caused performance changes.

Step 4: Determine Required Sample Size

Statistical validity requires sufficient sample size. Testing with 50 visitors per variation won't yield reliable conclusions.

Use an A/B test sample size calculator (many free ones exist online) inputting:

Current baseline conversion rate

Minimum detectable effect (smallest improvement you care about)

Statistical confidence level (typically 95%)

Statistical power (typically 80%)

The calculator determines how many visitors (and therefore roughly how many conversions) per variation you need before declaring a winner.
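
For readers who want to see what such a calculator does internally, the following is a minimal sketch based on the standard normal-approximation formula for comparing two proportions with a two-sided test. The function name and the example inputs (5% baseline, 10% relative minimum detectable effect) are assumptions chosen for illustration, and individual online calculators may use slightly different formulas.

```python
import math
from statistics import NormalDist


def sample_size_per_variation(baseline_rate, relative_mde,
                              confidence=0.95, power=0.80):
    """Approximate visitors needed per variation (two-sided test)."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_mde)  # rate we want to be able to detect
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p1 + p2) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


# Hypothetical inputs: 5% baseline conversion, detect a 10% relative lift.
n = sample_size_per_variation(0.05, 0.10)
print(f"Visitors needed per variation: {n}")
```

With these inputs the requirement is on the order of 31,000 visitors per variation, which illustrates why small effects on low baseline rates need substantial traffic.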

Step 5: Set Test Duration

Run tests long enough to account for day-of-week and time-of-day variations. A minimum of one full week is typically recommended, and 2-4 weeks is often needed to collect sufficient data.

Don't stop tests early just because one version is "ahead." Statistical significance requires the predetermined sample size, not premature judgment.
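
One way to turn a required sample size into a planned duration is sketched below. The function name, the traffic figures, and the choice to round up to whole weeks are assumptions made for illustration; the rounding follows the advice above about covering complete day-of-week cycles.

```python
import math


def planned_duration_days(sample_per_variation, variations,
                          daily_visitors, min_days=7):
    """Days to run the test, rounded up to whole weeks."""
    total_needed = sample_per_variation * variations
    raw_days = math.ceil(total_needed / daily_visitors)
    days = max(raw_days, min_days)   # never shorter than one week
    return math.ceil(days / 7) * 7   # cover complete weekly cycles


# Hypothetical inputs: 31,000 visitors per variation, 2 variations,
# 4,000 eligible visitors per day entering the test.
days = planned_duration_days(31_000, 2, 4_000)
print(f"Planned duration: {days} days")
```

In this example the raw requirement is 16 days, which rounds up to a three-week (21-day) test.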

Step 6: Ensure Proper Traffic Split

Most testing tools handle this automatically, but verify traffic splits randomly and evenly (typically 50/50, sometimes 90/10 for very risky tests).

Random assignment is critical. If the control gets morning traffic and variant gets afternoon traffic, external factors confound results.
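
Testing tools implement random assignment in different ways. One common pattern, sketched here as an assumption rather than a description of any specific tool, is to hash a stable visitor ID together with an experiment name. This gives each visitor an effectively random but repeatable bucket, so the same person always sees the same version regardless of time of day. The identifiers `assign_variant`, `user_id`, and `experiment` are hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, split_b: float = 0.5) -> str:
    """Deterministically assign a visitor to 'A' or 'B'."""
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Map the first 32 bits of the hash to a number in [0, 1).
    bucket = int(digest[:8], 16) / 0x100000000
    return "B" if bucket < split_b else "A"


# The same visitor always lands in the same group for a given experiment.
print(assign_variant("visitor-123", "cta-color"))

# A 90/10 split for a risky change: only 10% of visitors see the variant.
print(assign_variant("visitor-123", "checkout-redesign", split_b=0.10))
```

Including the experiment name in the hash keeps assignments in one test independent of assignments in another.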

Interpreting A/B Test Results

Understanding whether results are meaningful requires statistical analysis, not just comparing raw numbers.

Statistical Significance

If Variant B shows 5.2% conversion versus Control A's 5.0% conversion, is that meaningful or random noise?

Statistical significance testing (typically a chi-square test for conversion data) calculates how likely it is that a difference at least as large as the one observed would appear by chance if the two versions truly performed the same. The standard threshold is 95% confidence, meaning that probability (the p-value) must be below 5%.

Most A/B testing platforms calculate this automatically, showing "statistical significance achieved" when the threshold is reached.
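
As a rough illustration of what a platform computes, the sketch below runs a two-proportion z-test, which for a simple two-version comparison gives the same result as a chi-square test without continuity correction. The function name and the visitor counts are assumptions; only the 5.0% and 5.2% conversion rates come from the example above.

```python
import math
from statistics import NormalDist


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for the difference between two conversion rates."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no conversions (or all conversions): nothing to compare
    z = (p_b - p_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# 5.0% vs 5.2% with 10,000 visitors per version (hypothetical volume).
p_small = two_proportion_p_value(500, 10_000, 520, 10_000)

# The same rates with 200,000 visitors per version (hypothetical volume).
p_large = two_proportion_p_value(10_000, 200_000, 10_400, 200_000)

print(f"10,000 per version:  p = {p_small:.3f}")
print(f"200,000 per version: p = {p_large:.3f}")
```

The same 0.2 percentage point gap is indistinguishable from noise at the smaller volume and clears the 5% threshold at the larger one, which is why raw rates alone cannot settle the question.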

Practical Significance

Even statistically significant results might not matter practically. A landing page test showing a statistically significant improvement of 0.1 percentage points (from 2.0% to 2.1%) might not justify the effort of implementing the change.

Consider business impact. Does the improvement warrant implementation effort? For high-traffic pages, tiny improvements compound into significant revenue. For low-traffic pages, pursue larger wins.

Confidence Intervals

Rather than just "Variant B is better," confidence intervals show the range of likely true performance. "Variant B is 10-15% better with 95% confidence" provides richer information than "Variant B wins."
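
A simple way to compute such a range is the normal-approximation (Wald) interval for the difference between two conversion rates, sketched below. Note that it reports the absolute difference in conversion rate rather than a relative percentage, and that the function name and the counts are hypothetical.

```python
import math
from statistics import NormalDist


def difference_confidence_interval(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Wald interval for (rate of B) minus (rate of A)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    se = math.sqrt(p_a * (1 - p_a) / n_a + p_b * (1 - p_b) / n_b)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    diff = p_b - p_a
    return diff - z * se, diff + z * se


# Hypothetical result: 1,000 of 20,000 convert on A, 1,150 of 20,000 on B.
low, high = difference_confidence_interval(1_000, 20_000, 1_150, 20_000)
print(f"B minus A: {low:.4f} to {high:.4f} (absolute conversion rate)")
```

If the whole interval sits above zero, as it does for these numbers, the data favor Variant B; an interval that straddles zero means the test has not separated the two versions.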

Test Validity

Before trusting results, verify test integrity:

Was sample size sufficient?

Did test run long enough?

Did traffic split randomly and evenly?

Were external factors constant (no major holidays, site outages, etc.)?

Did you avoid peeking and stopping early?

Invalid tests produce unreliable results, which can be worse than running no test at all because they lend false confidence to a wrong decision.

Common A/B Testing Mistakes to Avoid

Even experienced marketers make testing errors that invalidate results or lead to wrong conclusions.

Mistake 1: Testing Too Many Variables

Changing multiple elements simultaneously prevents knowing which caused performance differences. Test headlines separately from images, form fields separately from button color.

Mistake 2: Insufficient Sample Size

Stopping tests too early or with too little traffic produces unreliable results. Let tests run until they reach the predetermined sample size, then evaluate statistical significance.

Mistake 3: Peeking and Stopping Early

Checking tests repeatedly and stopping when one version is ahead creates false positives. Commit to predetermined sample size and duration.
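
The effect of peeking can be demonstrated with a small simulation. The sketch below runs A/A tests, in which both versions share the same true conversion rate, so every "significant" result is a false positive. It compares stopping at the first significant look against evaluating only once at the end. All parameters (400 runs, 10 looks, 100 visitors per look, a 10% conversion rate) are illustrative assumptions.

```python
import math
import random
from statistics import NormalDist


def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value from a pooled two-proportion z-test."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def simulate_aa_tests(runs=400, looks=10, batch=100, rate=0.10, seed=42):
    """Return (false positive rate with peeking, rate with one final look)."""
    rng = random.Random(seed)
    peeking_hits = fixed_hits = 0
    for _ in range(runs):
        conv_a = conv_b = n = 0
        ever_significant = False
        for _ in range(looks):
            # Both arms draw from the SAME true rate: there is no real winner.
            conv_a += sum(rng.random() < rate for _ in range(batch))
            conv_b += sum(rng.random() < rate for _ in range(batch))
            n += batch
            significant = p_value(conv_a, n, conv_b, n) < 0.05
            ever_significant = ever_significant or significant
        peeking_hits += ever_significant  # would have stopped at some look
        fixed_hits += significant         # only the final look counts
    return peeking_hits / runs, fixed_hits / runs


peek_rate, fixed_rate = simulate_aa_tests()
print(f"False positives when peeking:       {peek_rate:.1%}")
print(f"False positives with one final look: {fixed_rate:.1%}")
```

Because every run that is significant at the final look also counts under peeking, the peeking rate can never be lower, and in practice it is noticeably higher than the nominal 5%.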

Mistake 4: Testing Insignificant Elements

Button corner radius or hover effects rarely impact conversion rates meaningfully. Test elements that plausibly affect user behavior: headlines, value propositions, form complexity.

Mistake 5: Not Having a Hypothesis

Random testing without hypotheses wastes time. Informed hypotheses based on data or insights increase learning from every test.

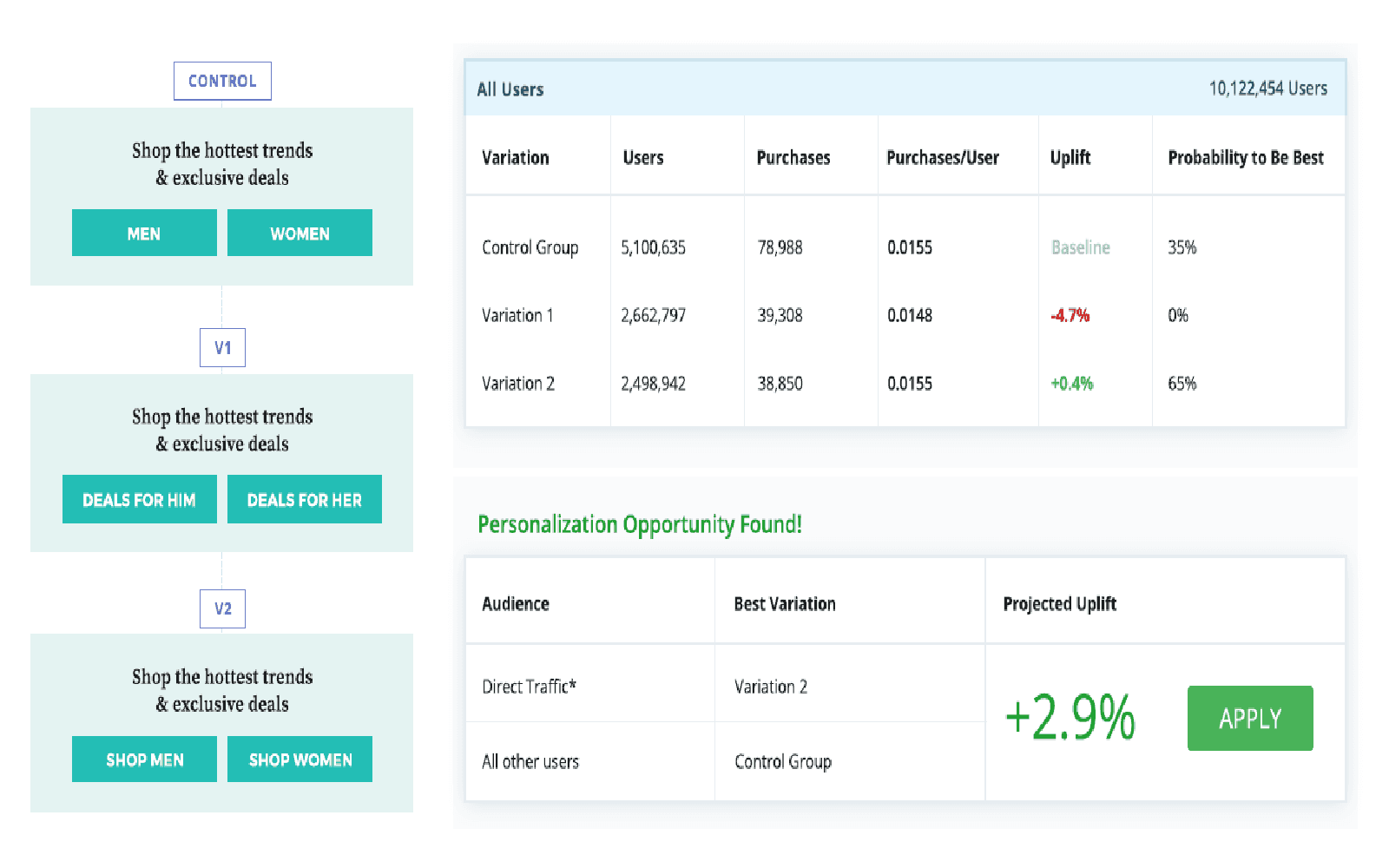

Mistake 6: Ignoring Segmentation

One variation might perform better overall but worse for a valuable segment. Analyze results by traffic source, device type, new vs. returning visitors to understand nuanced performance.

Mistake 7: Not Documenting and Learning

Test results should inform future decisions. Maintain a testing log documenting hypotheses, results, learnings, and applications to build institutional knowledge.

Mistake 8: Declaring Winners Too Quickly

Week-over-week performance fluctuates naturally. Ensure tests run through complete weeks and reach statistical significance before implementation.

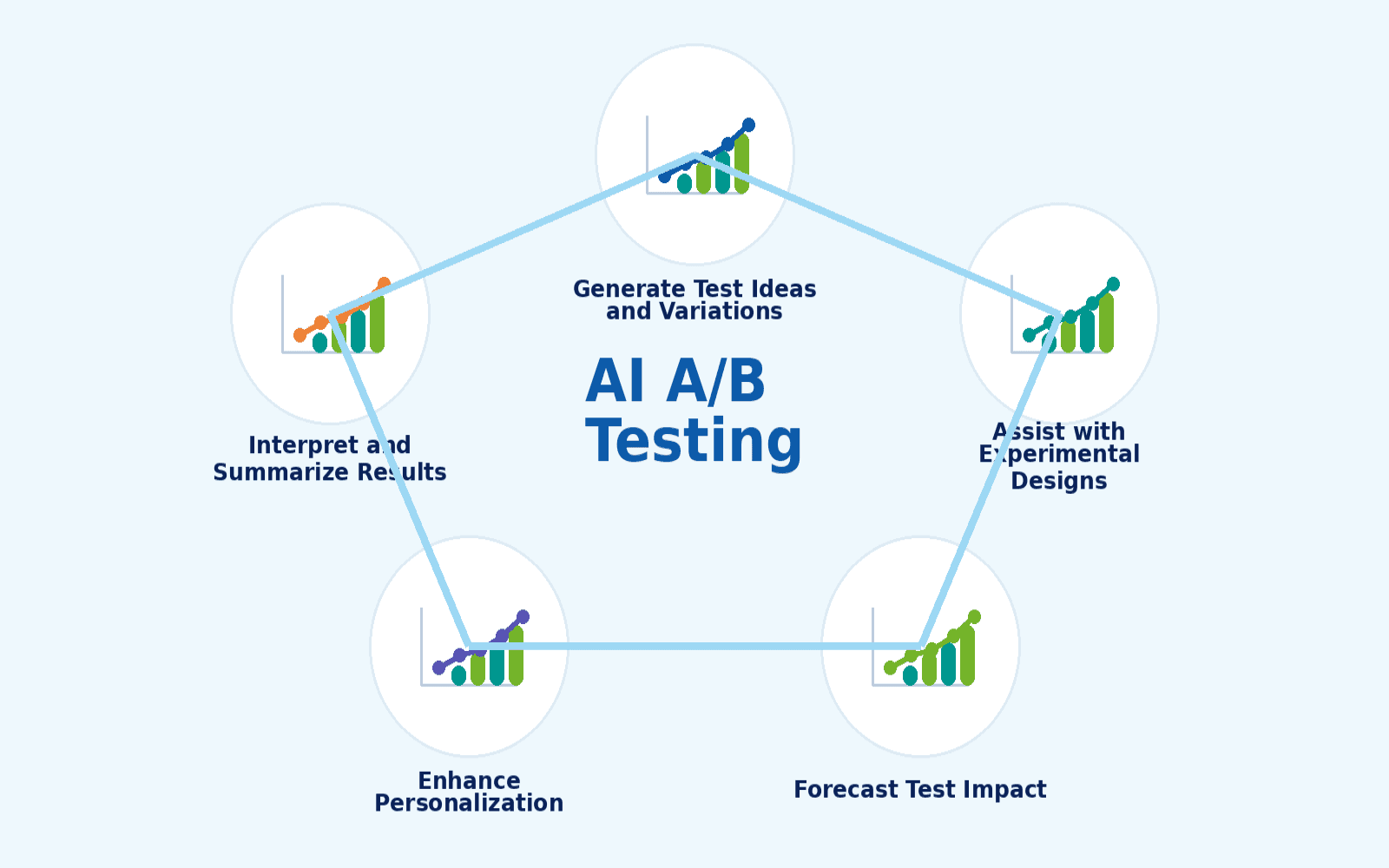

Advanced A/B Testing Concepts

Beyond basic testing, advanced techniques increase efficiency and learning.

Multivariate Testing (MVT)

Test multiple variables simultaneously to find optimal combinations. Instead of testing headline separately from image, MVT tests all combinations (Headline A + Image 1, Headline A + Image 2, Headline B + Image 1, Headline B + Image 2).

MVT requires substantially more traffic than A/B tests but finds interactions between variables. Use when you have high traffic and want to test combinations.
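
The traffic cost follows from how quickly the number of combinations grows. The sketch below enumerates the combinations from the headline and image example and shows how traffic is divided among them; the daily visitor figure is a hypothetical assumption.

```python
from itertools import product

headlines = ["Headline A", "Headline B"]
images = ["Image 1", "Image 2"]

# Every headline paired with every image.
combinations = list(product(headlines, images))
for headline, image in combinations:
    print(f"{headline} + {image}")

# Hypothetical traffic: each combination receives only a fraction of visitors.
daily_visitors = 4_000
visitors_per_combination = daily_visitors / len(combinations)
print(f"{len(combinations)} combinations, "
      f"{visitors_per_combination:.0f} visitors per combination per day")
```

Adding a third variable with two options would double the count to eight combinations and halve the traffic each one receives.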

Sequential Testing

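Rather than running for a fixed duration, sequential testing evaluates results at planned interim points using statistical methods designed for repeated looks at the data (some platforms use Bayesian approaches for a similar purpose), and declares a winner once the adjusted threshold is crossed. This can shorten test duration but requires careful implementation to avoid false positives.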

Personalization Testing

Test different content for different audience segments simultaneously. New visitors might see different value propositions than returning customers, with each segment's version optimized separately.

Bandit Algorithms

Multi-armed bandit testing automatically shifts traffic toward better-performing variations during the test, reducing the cost of showing losing variations. This balances learning (exploring variations) with earning (maximizing conversions).
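
Several bandit algorithms exist. The sketch below uses Thompson sampling, chosen here only as one well-known example, to show how traffic drifts toward the stronger variation over time. The true conversion rates, the number of rounds, and the function name are assumptions for the simulation; in a real test the true rates are unknown.

```python
import random


def thompson_sampling(true_rates, rounds=5000, seed=7):
    """Simulate a bandit test and return how often each arm was shown."""
    rng = random.Random(seed)
    successes = [0] * len(true_rates)
    failures = [0] * len(true_rates)
    pulls = [0] * len(true_rates)
    for _ in range(rounds):
        # Draw a plausible conversion rate for each arm from its Beta posterior.
        samples = [rng.betavariate(s + 1, f + 1)
                   for s, f in zip(successes, failures)]
        arm = samples.index(max(samples))  # show the arm with the best draw
        pulls[arm] += 1
        if rng.random() < true_rates[arm]:  # simulated visitor converts
            successes[arm] += 1
        else:
            failures[arm] += 1
    return pulls


# Hypothetical true rates: variation A converts at 5%, variation B at 15%.
pulls = thompson_sampling([0.05, 0.15])
print(f"Shown A: {pulls[0]} times, shown B: {pulls[1]} times")
```

Early on both arms are shown often (exploring); as evidence accumulates, most visitors are routed to the better performer (earning).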

These advanced techniques suit high-traffic situations or sophisticated testing programs. Start with standard A/B testing before advancing to complex methodologies.

Building a Testing Culture

One-off tests provide limited value. Systematic, ongoing testing compounds improvements over time.

Elements of Testing Culture

Curiosity Over Certainty: Question assumptions and defaults. "We've always done it this way" signals testing opportunity, not wisdom.

Data Over Opinions: Replace debates about what "should" work with tests revealing what actually works.

Learning from Failure: Celebrate tests that disprove assumptions. Learning what doesn't work is as valuable as finding what does.

Documentation: Maintain testing roadmaps, results logs, and learnings databases that build institutional knowledge.

Prioritization: Test highest-impact opportunities first. Traffic, conversion value, and baseline performance determine priority.

Systematic Process: Establish a repeatable testing process that covers hypothesis development, test design, implementation, analysis, and documentation of learnings.

Cross-Functional Collaboration: Testing succeeds when marketing, design, development, and analytics collaborate throughout the process.

Resource Allocation: Budget time and resources for testing as part of regular marketing operations, not occasional special projects.

Companies with mature testing cultures run dozens of tests quarterly, continuously improving performance across channels.

Frequently Asked Questions

How long should I run an A/B test? Minimum one full week to account for day-of-week variations, often 2-4 weeks to reach statistical significance. The test should run until you achieve the predetermined sample size, not simply until one version is "winning."

What tools do I need for A/B testing? Basic testing requires analytics (Google Analytics) and testing software (Optimizely, VWO, or built-in testing in platforms such as Mailchimp for email). Google Optimize, formerly a common free option, was discontinued by Google in September 2023. Advanced testing benefits from statistical analysis tools and documentation systems.

Can I run multiple tests simultaneously? Yes, on different pages or with different audience segments. Avoid testing the same traffic on overlapping elements (e.g., homepage headline and homepage form simultaneously) as this creates interactions that confound results.

What's a good conversion rate improvement to target? This depends on baseline performance and traffic volume. High-traffic sites might aim for 5-10% improvements. Lower-traffic situations might only detect 20%+ changes reliably. Focus on practical significance over arbitrary improvement targets.

Should I test with 50/50 or 90/10 traffic splits? 50/50 splits reach statistical significance fastest. 90/10 splits reduce risk when testing radical changes on high-stakes pages, but require much longer test duration to achieve significance.

How do I know if my test results are trustworthy? Verify that the predetermined sample size was reached, the test ran for the minimum duration (at least one full week), traffic split randomly and evenly, no major external factors occurred during testing (holidays, site issues), and statistical significance was achieved per your testing tool's calculations.

Conclusion

A/B testing transforms marketing from an art of educated guessing into a science of continuous, data-driven improvement. By systematically comparing variations and measuring actual performance rather than assuming what works, marketers optimize conversion rates, improve ROI, and make confident strategic decisions.

The methodology is straightforward: compare two versions, measure results, implement the winner. However, meaningful testing requires understanding statistical validity, designing proper experiments, avoiding common pitfalls, and building systematic testing processes into marketing operations.

Start with high-impact opportunities: landing pages with significant traffic, email campaigns with large audiences, or paid advertising with substantial spend. Form clear hypotheses based on data or insights, test one variable at a time, let tests run to statistical significance, and document learnings to inform future decisions.

The businesses that excel at A/B testing share common characteristics: curiosity about what actually works, commitment to data over opinions, systematic testing processes, and patience to let tests conclude properly. They view testing not as occasional experiments but as fundamental to how they optimize marketing performance.

Begin your A/B testing journey with a single test on a high-traffic page or campaign. Follow proper experimental design, let it run to completion, analyze results thoughtfully, and document learnings. That first successful test often catalyzes broader testing adoption as teams experience the clarity and confidence data-driven decisions provide.

Marketing success increasingly belongs to organizations that test systematically, learn continuously, and optimize relentlessly. A/B testing provides the framework for achieving all three, transforming marketing from hopeful broadcasting into strategic, measurable, continuously improving systems that drive predictable business growth.

Timeframe

2022 - 2023

Client

Escoba Inc.